AI-Assisted Threat Modeling: What Auspex Gets Right and What Still Requires Human Judgment

Author: Juhie Chandra

AI-assisted threat modeling is not a new idea, but it is often evaluated on the wrong axis. AppSec teams experiment with prompts that generate threat lists, STRIDE tables, or example attack scenarios from a system description. The tooling is easy to try, and the initial results are often encouraging.

Evaluation then tends to focus on output quality. Can the model generate better threats, identify obscure attack paths, or apply frameworks more consistently? When these outputs disappoint, the conclusion drawn is usually that the model is not sophisticated enough or that security reasoning remains beyond automation.

But this framing misses a more fundamental constraint: the absence of a stable, shared understanding of what exists, how components interact, and where boundaries lie. Without this foundation, large AppSec programs struggle to produce consistent and reliable results. Threat reasoning, human or automated, has very little to stand on.

That is why JPMorgan Chase’s Auspex work is worth examining. Auspex shows a practical way to use AI in threat modeling without losing the reasoning that makes the exercise useful. It is interesting precisely because it is conservative in where it applies automation and explicit about where it does not.

At its core, Auspex applies AI to the parts of threat modeling that are time-consuming, repetitive, and hard to standardize while leaving judgment-driven risk reasoning to humans.

Executive Summary

What Auspex gets right

AI fails at threat modeling when systems are poorly understood. Auspex treats system legibility as an explicit phase. AI is used to normalize inputs, infer structure, reconcile ambiguity, make scope and assumptions explicit, and prepare context, rather than to make risk decisions.

Threat reasoning is role-based, preserving diverse security perspectives instead of flattening them

What still requires humans

Resolving ambiguity in architecture and assumptions

Prioritizing threats and accepting risk

Making trade-offs between controls, usability, and business context

Defining in-scope and out-of-scope elements for threat modeling

Why AI-Assisted Threat Modeling Struggles in Practice

Most AI-driven threat modeling efforts fail for a predictable structural reason. They attempt to move directly from a partial system description to a list of threats.

A common assumption is that the problem can be solved by providing more input. Teams pass architecture diagrams, design documents, README files, and system overviews into a model and expect a better threat model to emerge.

The issue is the absence of a coherent, threat-model-ready system description. Architecture diagrams are approximations. Documentation lags reality. Ownership boundaries are blurry. Two engineers can look at the same diagram and disagree on what a component actually does, which data flows are real, or whether a boundary is logical or merely organizational. These ambiguities are normal in real systems, but they are fatal to automated reasoning if left unresolved.

Experienced security engineers recognize this immediately. In real threat modeling sessions, most of the time is not spent debating threats. It is spent aligning on what the system is: what the boxes represent, which data flows actually exist, which integrations are active, which environments matter, and what is intentionally excluded from analysis. Senior security engineers often spend more effort reconciling diagrams and assumptions than discussing threats.

When AI is asked to generate a threat model directly from raw or aggregated documentation, it is effectively being asked to perform three tasks at once:

Infer a consistent system model

Decide scope and assumptions

Reason about threats

This is where AI-generated threat models usually fail. The resulting output often looks generic because the system description was never sufficiently legible to support meaningful reasoning. Threats become broad, interchangeable, and weakly grounded because the context they depend on was implicit, contradictory, or missing.

Before threat reasoning begins, the system needs to be decomposed into identifiable components and assets. In-scope and out-of-scope elements must be made explicit. Trust boundaries, deployment assumptions, and usage patterns need to be stated rather than implied.

This preparatory step is usually performed informally by senior security engineers. It is rarely documented as a distinct phase, even though it consumes a significant portion of the effort and determines the quality of everything that follows.
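To make the idea of a threat-model-ready description concrete, here is a minimal sketch of what the output of that preparatory step could look like as data. The class and field names (`Component`, `DataFlow`, `SystemDescription`, `unknown_endpoints`) and the example system are hypothetical and are not taken from Auspex. The point is only that scope, trust boundaries, and assumptions become stated fields that can be checked.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Component:
    name: str
    kind: str        # e.g. "service", "datastore", "external"
    in_scope: bool   # scope is stated, never implied


@dataclass(frozen=True)
class DataFlow:
    source: str
    target: str
    data: str
    crosses_trust_boundary: bool


@dataclass
class SystemDescription:
    components: list[Component] = field(default_factory=list)
    flows: list[DataFlow] = field(default_factory=list)
    assumptions: list[str] = field(default_factory=list)

    def unknown_endpoints(self) -> list[str]:
        """Names used in flows that no declared component accounts for."""
        known = {c.name for c in self.components}
        used = {f.source for f in self.flows} | {f.target for f in self.flows}
        return sorted(used - known)


# Hypothetical example system, for illustration only.
desc = SystemDescription(
    components=[
        Component("web-app", "service", in_scope=True),
        Component("orders-db", "datastore", in_scope=True),
    ],
    flows=[
        DataFlow("web-app", "orders-db", "order records", False),
        DataFlow("web-app", "payments-api", "card token", True),
    ],
    assumptions=["Only the production environment is analyzed."],
)
print(desc.unknown_endpoints())  # ['payments-api']
```

A flow that points at an undeclared component is exactly the kind of gap a session would otherwise discover halfway through: someone has to decide whether `payments-api` is in scope before threat reasoning starts.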

Why Auspex Is Worth Paying Attention To

Auspex does not attempt to fix threat modeling by improving the model. It changes the shape of the process. Instead of treating threat modeling as a one-shot generation task, Auspex formalizes something senior security engineers already do instinctively: they spend time understanding the system before reasoning about threats.

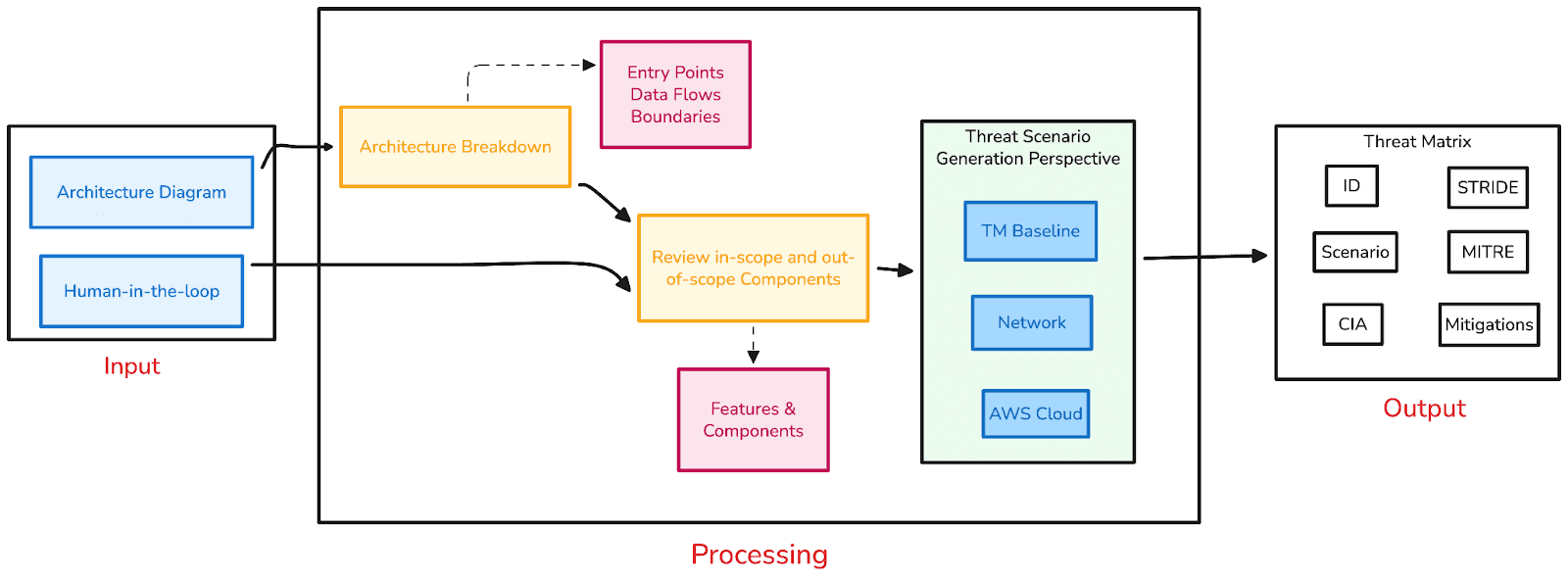

Using prompt chaining to mimic the thought process of security engineers, Auspex structures threat modeling as a two-stage pipeline.

Stage 1: Understanding the system. Auspex converts the uploaded architectural descriptions into detailed components, then iterates on that description with users (a human-in-the-loop step).

Stage 2: Generating the threat matrix. The detailed system context is passed to the model together with the perspective from which the threat modeling is being conducted, and the model produces the threat matrix.

This mirrors how experienced AppSec practitioners actually work, even if they do not always describe it formally.
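The two-stage shape can be sketched in a few lines. This is an illustration of prompt chaining with a human review step, not Auspex's implementation: the prompt wording, the function names, and the `fake_model` stand-in (used so the sketch runs without any model API) are all assumptions.

```python
from typing import Callable

Model = Callable[[str], str]  # hypothetical: prompt text in, completion text out


def stage1_describe(model: Model, raw_docs: str,
                    review: Callable[[str], str]) -> str:
    """Stage 1: draft a system description, then let a human correct it."""
    draft = model(f"Describe the components and data flows in:\n{raw_docs}")
    return review(draft)  # human-in-the-loop before anything downstream runs


def stage2_threats(model: Model, description: str, perspective: str) -> str:
    """Stage 2: reason about threats from the confirmed description only."""
    return model(
        f"Perspective: {perspective}\n"
        f"System description:\n{description}\n"
        "List threat scenarios with mitigations."
    )


calls: list[str] = []  # records every prompt the stand-in model receives


def fake_model(prompt: str) -> str:
    calls.append(prompt)
    return f"output-{len(calls)}"


def human_review(draft: str) -> str:
    # A reviewer confirms or corrects the draft; here they add a scope note.
    return draft + "\nConfirmed scope: production only."


description = stage1_describe(fake_model, "web app -> orders db", human_review)
matrix = stage2_threats(fake_model, description, "application security")
```

The second prompt never sees the raw documents. It sees only the description a person has confirmed, which is what keeps the ambiguity of stage 1 from leaking into stage 2.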

Pillar 1: System Legibility Is the Real Scaling Constraint

When AI is asked to “generate a threat model” prematurely, it produces generic output not because the threats are wrong, but because the system was never clearly understood.

The first and most important shift in Auspex is treating system legibility as a first-class concern, rather than framing AI as a threat modeling engine.

Given text notes, trusted system data, or architecture diagrams, AI is used to:

Normalize inconsistent descriptions

Infer missing structure

Identify applications, components, and data flows

Resolve scope boundaries

Produce a consolidated system description

Anyone who has facilitated real threat modeling sessions recognizes how much time is consumed here.

Auspex moves this effort earlier and makes it repeatable. Conceptually, this resembles approaches that decompose systems into assets and components before analysis. The goal is not insight yet, but shared understanding.

If system decomposition in your current threat modeling process is implicit rather than explicit, that ambiguity often propagates downstream into weaker and less defensible threat analysis.
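As a rough illustration of the normalization and reconciliation steps in this phase, the sketch below merges component mentions from two sources and separates what the sources agree on from what they contradict. It is deliberately rule-based and much simpler than anything a model would do; the function names and sample inputs are hypothetical.

```python
from collections import defaultdict


def normalize(name: str) -> str:
    """Collapse case, spaces, and underscores so name variants compare equal."""
    return name.strip().lower().replace("_", "-").replace(" ", "-")


def consolidate(sources: dict[str, dict[str, str]]):
    """Merge per-source {component: kind} maps; return (agreed, conflicts)."""
    seen: dict[str, set[str]] = defaultdict(set)
    for mentions in sources.values():
        for name, kind in mentions.items():
            seen[normalize(name)].add(kind.lower())
    agreed = {n: next(iter(k)) for n, k in seen.items() if len(k) == 1}
    conflicts = {n: sorted(k) for n, k in seen.items() if len(k) > 1}
    return agreed, conflicts


# Hypothetical inputs: the diagram and the README disagree about one component.
agreed, conflicts = consolidate({
    "diagram": {"Orders DB": "datastore", "Auth Service": "service"},
    "readme": {"orders_db": "datastore", "auth-service": "external"},
})
print(agreed)     # {'orders-db': 'datastore'}
print(conflicts)  # {'auth-service': ['external', 'service']}
```

The conflicts are not resolved automatically. They are the questions to put in front of the system's owners, which fits the article's point that this phase aims for shared understanding rather than insight.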

Pillar 2: Threat Reasoning Through Explicit Security Roles

Once a system is legible, Auspex applies AI to threat reasoning, but with an important constraint: the reasoning is role-based.

Instead of asking the model to reason generically “as a security expert,” Auspex simulates specific security roles and asks each role to interrogate the same system description.

A network-focused perspective surfaces different concerns than an application-focused one, not because one is superior, but because each carries different assumptions and priorities. This reflects how real threat modeling works in practice: expertise shapes attention.

The resulting threat scenarios are labeled using established taxonomies such as CIA and STRIDE and organized into structured threat matrices with corresponding mitigations.

This avoids a common failure mode in AI-generated threat models: flattened lists that treat all risks as interchangeable. Real threat modeling is not monolithic. Different experts notice different things, and Auspex preserves that diversity instead of smoothing it away.
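A small sketch of how role-specific prompts and a labeled threat matrix might be represented. The role descriptions, the prompt template, and the two sample rows are illustrative placeholders rather than Auspex's actual prompts or outputs. Only the six STRIDE category names are standard.

```python
from dataclasses import dataclass
from enum import Enum


class Stride(Enum):
    SPOOFING = "Spoofing"
    TAMPERING = "Tampering"
    REPUDIATION = "Repudiation"
    INFORMATION_DISCLOSURE = "Information disclosure"
    DENIAL_OF_SERVICE = "Denial of service"
    ELEVATION_OF_PRIVILEGE = "Elevation of privilege"


@dataclass(frozen=True)
class ThreatRow:
    role: str          # which perspective raised the scenario
    component: str
    scenario: str
    stride: Stride
    mitigation: str


# Hypothetical role definitions; each role reads the same system description.
ROLES = {
    "network": "Focus on exposure, segmentation, and traffic in transit.",
    "application": "Focus on authentication, input handling, and session state.",
}


def role_prompt(role: str, description: str) -> str:
    return (f"You are a {role} security reviewer. {ROLES[role]}\n\n"
            f"System:\n{description}")


def by_role(rows: list[ThreatRow]) -> dict[str, list[ThreatRow]]:
    """Group rows by role so perspectives stay separate instead of flattened."""
    grouped: dict[str, list[ThreatRow]] = {}
    for row in rows:
        grouped.setdefault(row.role, []).append(row)
    return grouped


rows = [
    ThreatRow("network", "orders-db",
              "Database port reachable from the app subnet without filtering",
              Stride.INFORMATION_DISCLOSURE, "Restrict access with network policy"),
    ThreatRow("application", "web-app",
              "Session token accepted after logout",
              Stride.SPOOFING, "Invalidate tokens server-side"),
]
matrix = by_role(rows)
print(sorted(matrix))  # ['application', 'network']
```

Keeping `role` as a column means a reviewer can see which perspective raised a scenario and notice when one perspective has produced nothing.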

Pillar 3: Prompts as Interfaces, Not Conversations

Auspex describes its approach as tradecraft prompting, but the underlying idea is more familiar than the terminology suggests. Rather than issuing a single open-ended prompt such as “generate a threat model for this system,” Auspex breaks the work into discrete steps with explicit input and output contracts.

One prompt produces a stable system description. Another consumes that description and reasons about threats from a specific perspective. Outputs are labeled, structured, and bounded.

This design choice enforces discipline. The model is not being asked to “think like a security engineer” in the abstract. It is being asked to perform specific transformations within known boundaries. Many unsuccessful AI threat modeling experiments treat prompts as conversations. Auspex treats them as interfaces.

In practice, this means the system behaves more like a function than a chatbot. Given a known input shape, it produces a predictable class of output. The model fills in details, but the boundaries are owned by the designers. That discipline is what allows the approach to scale without becoming brittle or overly generic.
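One way to read "prompts as interfaces" is that model output is accepted only when it satisfies a declared output contract. The sketch below assumes, purely for illustration, that the threat-reasoning step returns a JSON array of rows with four string fields. The field names and the `parse_threats` helper are hypothetical.

```python
import json

# Hypothetical output contract for the threat-reasoning step.
REQUIRED_FIELDS = ("component", "scenario", "stride", "mitigation")
STRIDE_LABELS = {
    "Spoofing", "Tampering", "Repudiation",
    "Information disclosure", "Denial of service", "Elevation of privilege",
}


def parse_threats(raw: str) -> list[dict]:
    """Return the rows if they satisfy the contract; raise ValueError if not."""
    rows = json.loads(raw)  # malformed JSON raises a ValueError subclass
    if not isinstance(rows, list):
        raise ValueError("expected a JSON array of threat rows")
    for i, row in enumerate(rows):
        if not isinstance(row, dict):
            raise ValueError(f"row {i}: expected an object")
        for name in REQUIRED_FIELDS:
            if not isinstance(row.get(name), str):
                raise ValueError(f"row {i}: missing or non-string field {name!r}")
        if row["stride"] not in STRIDE_LABELS:
            raise ValueError(f"row {i}: unknown STRIDE label {row['stride']!r}")
    return rows


good = ('[{"component": "web-app", "scenario": "Session token reuse", '
        '"stride": "Spoofing", "mitigation": "Rotate tokens"}]')
print(len(parse_threats(good)))  # 1
```

Output that drifts from the expected shape is rejected at the boundary instead of being passed along as free text, which is what lets a later step depend on it.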

What This Approach Does, and Does Not Change

Auspex does not attempt to automate threat modeling end to end. It mechanizes only the parts of a senior security engineer’s workflow that are structured, repeatable, and setup-heavy, while deliberately preserving human judgment.

AI is used to normalize fragmented inputs into a coherent system description, decompose systems into components and assets, enumerate baseline threat scenarios once context is clear, and apply standard taxonomies to produce structured, inspectable outputs. It prepares the ground for risk judgment, but does not make that judgment itself.

What AI does not do is decide which threats matter most, make the final call on ambiguous architecture or assumptions, accept or reject risk, or claim completeness. Those responsibilities remain human, enforced by design rather than policy: AI produces scenarios and labels, not decisions.
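"Enforced by design rather than policy" can be illustrated at the level of types. In this sketch, the record a model is allowed to emit has no field for priority or disposition, and a decision cannot be constructed without a named person. The type names and the two dispositions are assumptions made for illustration, not a description of Auspex's data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Scenario:
    """What the model may emit: a described, labeled scenario. No priority."""
    scenario: str
    label: str


@dataclass(frozen=True)
class Decision:
    """What only a person may create: a disposition with a named owner."""
    scenario: Scenario
    disposition: str   # "accept" or "reject"
    decided_by: str
    rationale: str

    def __post_init__(self) -> None:
        if self.disposition not in {"accept", "reject"}:
            raise ValueError(f"unknown disposition {self.disposition!r}")
        if not self.decided_by.strip():
            raise ValueError("a decision needs a named human owner")


s = Scenario("Session token accepted after logout", "Spoofing")
d = Decision(s, "reject", "a.reviewer", "Token reuse is not tolerable here.")
print(d.disposition)  # reject
```

Because the model-facing type carries no risk fields, there is nowhere for a generated priority to hide. Any ranking that exists was entered by someone who can be asked about it.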

The effect shows up in how sessions are spent. Instead of debating obvious threats, sessions focus on assumptions, trade-offs, and edge cases. Threat modeling stops being a heavyweight ritual and becomes something that can be initiated more often and refined over time.

Implications for AppSec Teams

There are several practical lessons here.

First, pre-processing matters more than model choice. Better system representations consistently produce better threat reasoning.

Second, role-aware reasoning scales better than generic threat lists. Multiple expert perspectives do not require multiple humans, but they do require structured prompting.

Third, AI-assisted threat modeling works best as a capability, not a one-shot feature. It should be treated like a function with defined inputs, transformations, and outputs, not a button labeled “Generate Threat Model.”

There are fair critiques as well. Without public data on whether threat scenarios are synthetic or derived from real incidents, external validation is difficult. Still, the reported internal validation by senior security teams suggests that the approach is operationally useful.

If an AI-generated threat model is consistently good enough to start meaningful conversations, it is worth questioning whether perfection is actually the bar that matters.

Closing Perspective

When threat modeling becomes cheaper to initiate and easier to repeat, teams can do it more often, focus sessions on what is unclear rather than what is obvious, and escalate only when the risk justifies deeper analysis. That is how mature programs scale without exhausting their security teams.

In practice, threat modeling does not fail to scale because teams cannot generate threats. It fails because system understanding and risk judgment take human time, and that time does not scale with the number of systems to be modeled.

That is why Auspex is worth paying attention to.